Edge vs Cloud AI in Manufacturing: Where Each Actually Belongs

Should we run our AI at the edge or in the cloud?

Most industrial AI deployments are built around the wrong question. "Edge or cloud?" treats a deployment decision as a binary choice, when the real question is simpler and more useful: what does this specific workload actually require?

Edge AI offers real-time inference with no network dependency. Cloud AI offers the depth of compute and data that builds models worth deploying in the first place. Both are genuinely necessary. Neither is universally correct. And the architecture that works is almost always a hierarchy of both, not a choice between them.

This piece looks at where each layer performs, where each fails, and how to place workloads correctly across the hierarchy. The goal is not a verdict. It is a framework for making the decision well, every time.

What Edge AI and Cloud AI Actually Mean on the Plant Floor

Edge AI processes data where it is generated. The model runs locally on a gateway or industrial PC, directly on the factory floor. Data from sensors, cameras, and machines is analyzed on the spot, and the result is immediate.

Cloud AI processes data in a remote data center. Information travels over a network to where the compute lives. The infrastructure scales to meet demand, and the same models can serve every site in an operation.

Both are real approaches to industrial AI, built for different problems and different scales.

Where Edge AI Works Best

Think about what happens in the seconds after a sensor detects something abnormal on a running line. There is no time to package that data, send it to a remote server, wait for a response, and act. The machine has already moved on.

This is the environment edge AI was built for. Manufacturing lines running at speed, assets in remote locations, facilities where network connectivity is inconsistent or restricted. In these situations, the model needs to live close to the source, processing data as it arrives and producing a result before the next event occurs.

Quality inspection is one of the clearest examples. A vision system identifying surface defects, dimensional errors, or assembly faults on a fast-moving line has a window measured in milliseconds. The same logic applies to condition monitoring on rotating equipment, anomaly detection on process instrumentation, and real-time control adjustments based on live sensor feeds.

Edge AI also matters where data cannot easily leave the facility. Regulatory requirements, network constraints, or simple operational practicality all create situations where keeping data local is not optional. Running the model at the edge removes the dependency entirely.

The use cases are varied but the underlying condition is consistent. When speed, proximity, or data locality is non-negotiable, the architecture decision is straightforward.

Where Cloud AI Works Best

Edge AI handles the moment. What it cannot handle is everything that happens before that moment becomes possible.

A single facility sees a fraction of the picture. One site running one set of machines accumulates useful data. But an organization running fifty sites, each generating operational data around the clock, is sitting on something far more valuable. The patterns that predict failure, the process conditions that drive quality, the anomalies that precede downtime. These only become visible when data is brought together at scale.

That is the problem cloud AI was built to solve. Not speed, but depth. Not real-time response, but the kind of learning that takes weeks of data, hundreds of assets, and serious compute to produce. A predictive model that has seen ten thousand failure events across a global fleet will always outperform one trained on a single site. The cloud is where that model gets built.

It is also where industrial organizations manage AI at scale. Retraining models as conditions change, pushing updates across distributed deployments, monitoring performance across every site from a single place. Doing that at the edge, independently, across dozens of locations, is not a realistic proposition.

The workloads that belong in the cloud share a common characteristic. They are not time-critical, but they are data-hungry and compute-intensive. And the value they produce, better models, broader visibility, smarter operations, flows back down to the plant floor where it matters.

The Trade-offs

Every architecture decision in industrial AI comes down to the same five factors. Get them right and the deployment works. Get them wrong and the problems compound quietly until they become expensive.

Latency determines whether cloud is even an option for a given workload. Bandwidth determines what it costs to run it. Data ownership determines what is permissible. Model complexity determines what is technically feasible. And operational overhead determines what the true long-term cost looks like, not just the build cost.

| Factor | Edge | Cloud |

|---|---|---|

| Latency | Real-time, no network dependency | Adds network round-trip, not suitable for time-critical decisions |

| Bandwidth | Data stays local, minimal transfer costs | High volumes must travel, costs and infrastructure scale with data |

| Data Ownership | Stays on site, no external exposure | Requires a deliberate strategy on what leaves and where it lives |

| Model Complexity | Constrained by local hardware | No practical ceiling, runs the largest and most complex models |

| Operational Overhead | Multiplies with every site added | Centralized but dependent on connectivity and third-party uptime |

The factors rarely pull in the same direction. A workload that demands low latency often generates high data volumes, making cloud impractical on two counts at once. A model complex enough to require cloud compute may involve data that cannot leave the facility. These tensions are where most architecture decisions get made, and where getting the balance right matters most.

The Hierarchy and Where Each Workload Belongs

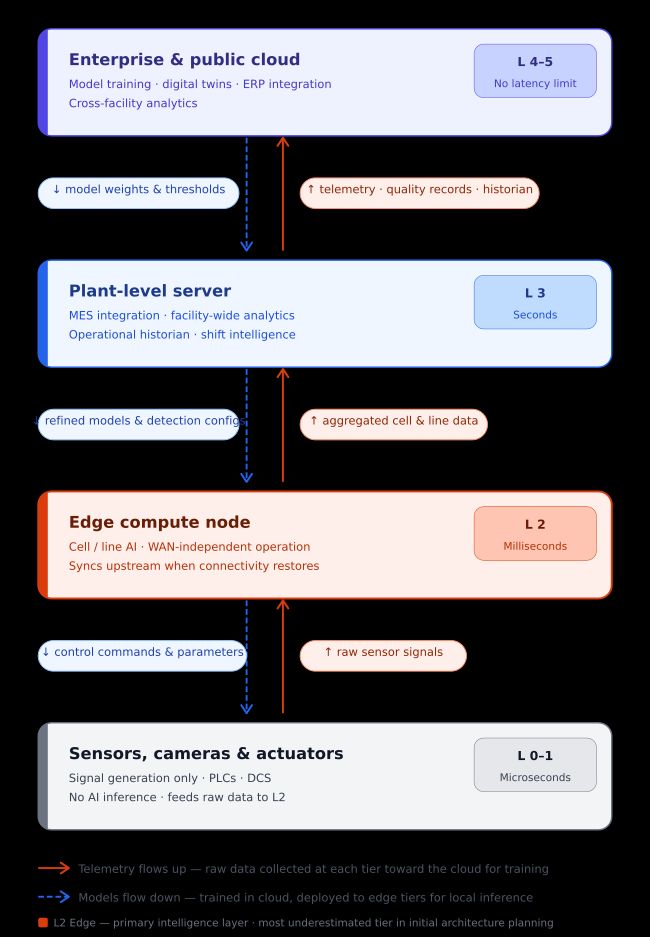

The architecture that resolves the edge-vs-cloud question is a five-level hierarchy, one the manufacturing industry already knows. It maps directly to the ISA-95 functional model that has guided industrial operations for decades.

Each level handles a different class of decisions, at a different speed, with a different scope of data.

- Level 0–1 (On-Device): Inference runs directly on the sensor, camera, or actuator. Models are small and fast, operating in microseconds with no network involved.

- Level 2 (Edge Compute Node): Pulls data from multiple machines and runs more capable models across a production cell or line. Keeps working during network outages and syncs upstream when connectivity returns. This level is the most commonly skipped in early planning and the most expensive to add later.

- Level 3 (Plant-Level Server): Handles facility-wide analytics, MES integration, and the data historian that feeds both local reporting and cloud synchronization.

- Level 4–5 (Enterprise and Cloud): Where models are trained, retrained, and managed across every site. Latency is not a concern here. Compute depth is.

Data flows up through this hierarchy for aggregation and learning. Model updates, detection thresholds, and configurations flow back down. An architecture that flips this, sending time-sensitive decisions to the cloud while the edge just passes data along, will run into the same problems as a cloud-only deployment, regardless of what it is called.

Placing workloads correctly is where most industrial AI projects go wrong. The starting point is always the decision itself: what is the AI being asked to do, and what happens if the answer is late? Three questions settle most cases:

What response time is acceptable? Milliseconds point to Level 0–2. Seconds to minutes allow Level 3. Hours or longer can go to the cloud.

Can the data leave the facility? Regulatory constraints, network limitations, or operational policy may make cloud processing impermissible regardless of latency.

How much context does the model need? Single-asset inference fits at the edge. Cross-site pattern recognition belongs in the cloud.

In most real deployments, the same asset feeds multiple levels simultaneously: a Level 2 anomaly detection model acting in real time, a Level 3 shift performance report running every few hours, and a Level 4–5 fleet-wide predictive model retrained monthly. These are three different problems running at three different speeds, not one problem waiting for one answer.

Conclusion

The edge-vs-cloud question has a straightforward answer once the right question is being asked. Not "where should we run our AI?" but "what does this specific workload require, and which level of the hierarchy delivers it?"

Edge and cloud are not competing philosophies. They are complementary layers of a single architecture that the manufacturing industry already understands. What industrial AI adds is the requirement to place intelligence deliberately at each level, not just data collection at the bottom and reporting at the top, but active inference, model management, and continuous learning distributed across the hierarchy in proportion to where the decisions actually happen.

The technology to do this exists. The frameworks are mature, the hardware is proven, and the use cases are well established. What separates deployments that deliver sustained operational value from the ones that stall after the pilot is not the sophistication of the models. It is the clarity of the architecture and the discipline to build it in the right order.

Define the decision. Map it to the hierarchy. Build the infrastructure that decision requires. Then train the model.

That sequence is what industrial AI at scale actually looks like, and FlowFuse is the platform built to run it. From connecting machines and collecting data, to transforming and visualizing it in real time, to running model inference directly in the flow with ONNX nodes, wiring live plant data into AI agents with MCP, and letting operators query their operations in plain language with Expert Insights. One platform. Every level of the hierarchy.

Run AI at Every Level of Your Operation

FlowFuse connects machines, transforms data, runs model inference with ONNX nodes, and wires live plant data into AI agents. One platform across every level of the hierarchy.

About the Author

Sumit Shinde

Technical Writer

Sumit is a Technical Writer at FlowFuse who helps engineers adopt Node-RED for industrial automation projects. He has authored over 100 articles covering industrial protocols (OPC UA, MQTT, Modbus), Unified Namespace architectures, and practical manufacturing solutions. Through his writing, he makes complex industrial concepts accessible, helping teams connect legacy equipment, build real-time dashboards, and implement Industry 4.0 strategies.